Over the past year, we’ve noticed a growing phenomenon in AI search: customers finding us and our clients through long, heavily personalized conversations with AI. How do we know? Because we’ve asked them to share their chats.

While it’s great that customers are finding us and our clients through AI search, this creates a reproducibility problem. How do you get more of these leads if they’re coming from unique, highly personal conversations with, say, ChatGPT?

You can’t see these “AI rankings” in your AI visibility software. Peec, Profound, or our own tool, Traqer, are not going to show you the three-page conversation your recent lead had with ChatGPT. No one has that data (besides OpenAI, which obviously hasn’t revealed it). These are private, highly personalized conversations where the AI tool knows an astonishing amount about the user’s pain points and needs.

Company after company we talk to is saying they’re getting these leads, but they don’t know how. They don’t know exactly what customers are typing into LLMs, yet the leads keep coming.

We’re calling this phenomenon Invisible Prompts because you can’t see what they are.

But they’re very real, and as I’ll argue below, they’re only going to grow.

What Are Invisible Prompts?

It’s important to clarify that by “Invisible Prompts,” we don’t just mean prompts of a slightly different wording that you happen to not be tracking — like “what are some good content agencies?” versus “find me the best content agencies”.

It’s not as simple as just thinking of those alternative wordings and sticking them into your AI visibility tool, feeling like you have everything covered.

This is not what we mean. It’s not a wording issue. It’s much deeper.

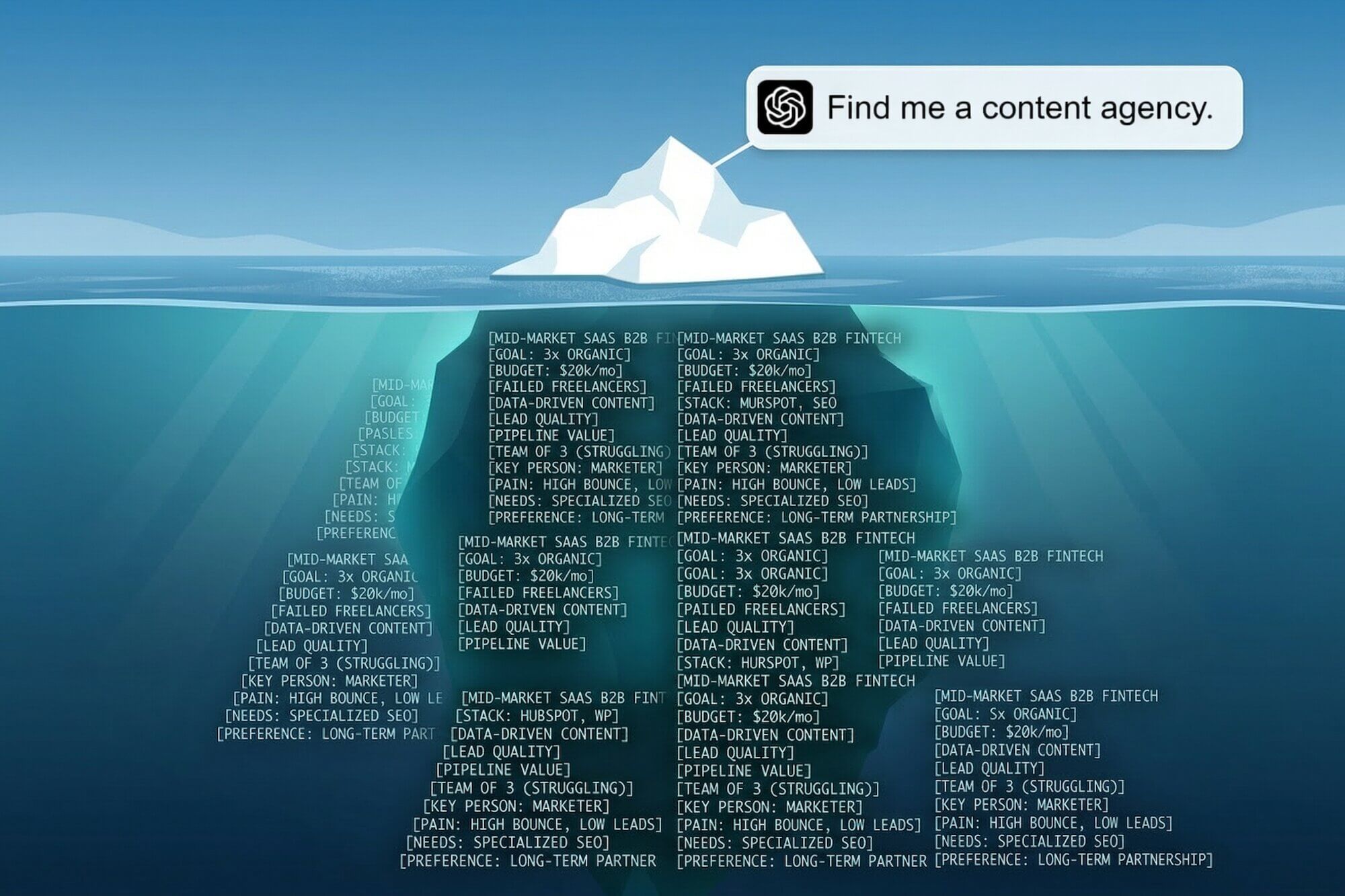

The issue is that even if a real user types in the exact wording you are tracking in some AI visibility tool, ChatGPT (or Claude or Gemini) will factor in a massive amount of heavily personalized information about your customer that you can’t predict. This extra personal information about the user will completely change what ChatGPT recommends to them.

We’ve started thinking about this as the difference between the literal prompt and the effective prompt. The literal prompt is what the user literally types into ChatGPT. The effective prompt includes all the additional information ChatGPT knows about them.

For example, one of our leads could type “find me a good content marketing agency”. What ChatGPT will actually do to generate an answer is take into account everything it knows about that customer — things like what size business they have, their budget, their industry, what they’ve tried in the past, who is on their team already, and more.

So, although their literal prompt may just be “find me a good content marketing agency”, their effective prompt is more like a 1,000-word essay on their business situation that ends with “…and so taking all that into account, what do you think would be a good content marketing agency for me?”
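To make the distinction concrete, here is a minimal conceptual sketch in Python. It is not how ChatGPT or any other assistant actually assembles context internally (that isn’t public). The `UserContext` class, the `effective_prompt` function, and the sample facts are all hypothetical, invented purely to illustrate the idea of a short literal prompt sitting on top of a much longer background.

```python
from dataclasses import dataclass, field


@dataclass
class UserContext:
    """Hypothetical stand-in for what an assistant has learned about a user."""
    facts: list[str] = field(default_factory=list)


def effective_prompt(literal_prompt: str, context: UserContext) -> str:
    """Prepend remembered facts to the typed prompt (conceptual only)."""
    if not context.facts:
        # A brand-new or incognito user: the effective prompt is the literal one.
        return literal_prompt
    background = " ".join(context.facts)
    return f"{background} Taking all that into account: {literal_prompt}"


literal = "find me a good content marketing agency"

# Invented example facts; a real history would be far longer and messier.
known = UserContext(facts=[
    "We are a small B2B software company.",
    "Our last agency produced traffic but few leads.",
    "We have one in-house marketer and a limited budget.",
])

print(effective_prompt(literal, UserContext()))  # what a tracking tool tests
print(effective_prompt(literal, known))          # closer to what the lead "asked"
```

The first call is roughly what you reproduce when you paste a prompt into a fresh session. The second is closer to what a logged-in user with a long history is really asking.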

This is fundamentally not trackable. At least not in the “SEO style” that marketers are used to, where prompts are like keywords, each with some search volume.

What we’re saying is we are moving to a world where the search volume of most people’s prompts is one.

Your AI tracking tool isn’t surfacing these prompts to you — even ones like Profound that claim to have data from millions of “real” chats. That data is coming from people who have volunteered (knowingly or unknowingly) to have their web browsing tracked. Tools like Profound summarize patterns in that data, and then say “these prompts” have “this many” searches. So, fundamentally, it’s still the old SEO way of thinking: there are keywords and those keywords have certain search volumes.

What Profound or any other tool doesn’t know is the deep personalization underneath each prompt.

That personalization is what’s deciding whether or not you are recommended to the user.

Examples of Invisible Prompts

Back in early 2025, Benji wrote about how AI search would reward ultra-specific, bottom-of-funnel content. That post was an early version of this argument: that LLMs would factor in all this personal context when making recommendations.

In that post, he shared this example of one of our current clients who found us on ChatGPT. We asked her to share the chat where it recommended us. Here is her exact prompt and the beginning of ChatGPT’s answer, where it recommends us:

Her literal prompt was just: “What are the best SEO companies in your opinion who have driven massive results with endless leads for people in our kind of space?”

But you can see from the response that the effective prompt was longer. Look at how ChatGPT knows her space, knows her goals, knows her constraints. She didn’t actually type that in.

So here’s the issue: If we track this exact prompt in an AI visibility tool, we won’t see ourselves (Grow & Convert) mentioned, even though in real life, we were.

I actually tested this. I opened an incognito ChatGPT window and typed in her prompt, and even replaced “our kind of space” with “the business loans and funding space” to help it along.

The response is totally different from hers and doesn’t mention us at all, much less as the top option:

This is the visibility issue we’re talking about.

The real prompt — with all the context, nuance, and detail shaping whether ChatGPT, Claude, Gemini, or any other AI recommends you — is far longer, more detailed, and more personalized than you will ever see.

It’s invisible.

Why Invisible Prompts Are Only Going to Grow

This phenomenon, from what we can tell, is only growing as AI tools become more and more ingrained in everyone’s personal and professional lives and workflows. More people are logging in and staying logged in. (Do you know anyone who uses ChatGPT or Claude regularly but only in incognito?)

ChatGPT, Claude, and Gemini are building longer and longer histories of us. People are even using agents now and giving those agents access to their email and calendar. The context windows in these tools are growing (meaning they can take more information into account at once), and the tools themselves have memory features that carry details from your past chats into answers to new questions.

In other words, ChatGPT knows more about a user who’s been chatting with it for a year than one who just signed up. That history is context. And context changes recommendations.

All of which means: the gap between the literal prompt and the effective prompt is only getting wider.

How to Get More Invisible Prompt Leads

So what do you do to get more AI search leads if the true prompts are invisible? That’s what every company that has observed this asks us.

Our answer is the same thing we’ve been saying since Benji’s AI search post last year: create ultra-specific content that teaches LLMs all the myriad use cases and pain points where your solution is a good fit. You need to feed LLMs the details they need to recommend you in specific scenarios even though you can’t predict the searches a priori.

For example, we didn’t know our financial sector client above would ask ChatGPT for an SEO agency, much less how. We didn’t create content specifically for her prompt. We’ve just been writing about how most content marketing does an awful job of generating leads, and how we fix that, for 10 years.

That’s what you need to do. Create content for as many specific use cases and scenarios as possible. Start with the places and ways you shine. Start with actual case studies. Then move to features, pain points you solve, and the specific situations where your product or service is the right fit.

This Is Why Owned Content Is Tier 1 in Prioritized GEO

This is also exactly why we have “owned content” as the highest priority tier in our Prioritized GEO strategy.

Creating a variety of your own content, as opposed to chasing mentions in Reddit, gives you the room to discuss all the nuance and detail of where and when you shine, what pain points you solve, and more.

You can do this easily on your own site. On Reddit, what are you going to do? Drop your name in one sentence? That’s not enough context for an LLM to connect you to a specific user’s needs.

What Not to Do: Over-Focus on Single Prompt Visibility

Invisible Prompts also explain why we de-emphasize Tier 3, the on-site content tweaks. Adding schema markup or an llms.txt file won’t help you cover these unique scenarios. An FAQ might help at the margins, but actual detailed content is far more effective.

It also means that trying to game the system and show up for a single really specific prompt doesn’t make sense, because no one is actually going to ask that exact prompt. If you produce a dedicated article for one narrow prompt and show up for it, it may look good inside AI visibility tracking software, so your client or management team might be happy. But possibly no one is going to have that exact exchange with an LLM, so it’s not likely to help in a real scenario.

How to Measure Progress

So is measurement totally hopeless? We don’t think so, although this is all still evolving. We’re actually leaning heavily into Topic-Based GEO to solve this, and here’s why.

While you can’t know the exact prompts people will use, you can observe, across clients and over time, trends in how much you’re showing up in AI search at the topic level.
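As a rough illustration of what “visibility at the topic level” can mean, here is a minimal Python sketch. It assumes you have already collected AI answers for a set of tracked prompts and tagged each one with a topic. The `visibility_by_topic` function, the brand name `ExampleCo`, and the sample responses are all made up for this example; this is not how Traqer or any other tool computes its numbers.

```python
from collections import defaultdict


def visibility_by_topic(responses, brand):
    """Return {topic: share of responses that mention the brand}.

    `responses` is an iterable of (topic, response_text) pairs.
    Matching is a simple case-insensitive substring check.
    """
    mentions = defaultdict(int)
    totals = defaultdict(int)
    for topic, text in responses:
        totals[topic] += 1
        if brand.lower() in text.lower():
            mentions[topic] += 1
    return {topic: mentions[topic] / totals[topic] for topic in totals}


# Invented sample data, for illustration only.
sample = [
    ("B2B content marketing services", "ExampleCo and Agency A are strong options."),
    ("B2B content marketing services", "Agency B is a common pick."),
    ("B2C content marketing services", "Agency C focuses on consumer brands."),
    ("B2C content marketing services", "Agency D is popular with retailers."),
]

scores = visibility_by_topic(sample, "ExampleCo")
for topic, share in scores.items():
    print(f"{topic}: {share:.0%}")
```

The point of aggregating this way is that no single prompt matters much. What you watch is whether the share for a topic moves over time as you publish more content about it.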

We can see that clients with established brands and years of rock-solid SEO presence in their industry have visibility numbers like this:

While clients that are much younger, with newer domains, and without a long history of content and SEO presence, have numbers like this:

That matters.

And as per Topic-Based GEO, we can see this across topics. For example, our own AI visibility for “B2B content marketing services” versus “B2C content marketing services” looks completely different:

While we’ve had both B2B and B2C clients and our process works on both, we’ve had more B2B clients, have written more about B2B challenges, and published more B2B case studies. So the industry — and by extension, the LLMs — know us better in a B2B context, and that shows in visibility differences.

These topic-level differences are actionable. They mean that if we want to get more B2C clients, we need to start producing more content about our B2C work. That way, AI tools will have that information and context and be more likely to recommend us when someone who works at or owns a B2C business asks about content agencies.

That’s how you make Invisible Prompts work in your favor. The prompts themselves may be invisible, but you can still monitor how often you show up when certain topics come up.

Which takes me to a final analogy before we close.

Invisible Prompts Are Digital Word of Mouth

This is all curiously similar to the oldest and most effective marketing channel of all time: word of mouth.

Word of mouth is the holy grail of marketing and brand building. You eventually want to just be recommended whenever someone asks for a product or service in your space.

But think about what actually happens when you ask your friend for product recommendations. That conversation has all the nuance and detail about your specific situation behind it.

For example, if I ask Benji for surfboard recommendations, my literal question to him may just be “Hey, what board should I buy?” but in his head, he’s going to apply the same complexity that ChatGPT does. He’ll factor in all he knows about me and my surfing: the fact that I’m a beginner, that I’m 6’6” tall, that I currently ride a 10’ foam board, and that I’m extremely handsome. All of it matters.

So while my literal question to him is short (“What board should I buy?”), the effective question he’s answering is much longer. It includes all the details he knows about my surfing.

That’s true if someone asks a friend about buying software, hiring a law firm, buying clothes or shoes, or even finding a good coffee shop.

AI search is similar. Just replace the friend or colleague who knows a lot about you with ChatGPT, Claude, or Gemini.

The only difference: Benji knows about surfboards from years of actually surfing. ChatGPT only knows what it has read. So if you want LLMs to recommend you the way a knowledgeable friend would, you need to have published the content that gives them the context to do it.

You can’t predict the invisible prompts. But you can build the content foundation that gives LLMs enough detail to recommend you when those conversations happen.